I had the opportunity to talk with Carl Bialik, aka "The Numbers Guy" at the Wall Street Journal, about Run Expectancy and Win Expectancy in reference to Willie Randolph's decision not to bunt with runners on first and second and nobody out in the bottom of the ninth inning, down 3-1 in Game 7 of the NLCS. Of course, instead of bunting, pinch-hitter Cliff Floyd struck out, Jose Reyes lined out to center, Paul LoDuca walked, and Carlos Beltran struck out looking to send the Cardinals to the World Series.

Basically Carl is a Mets fan and wanted to explore how sabermetricians might evaluate the situation. The article is excellent but I just wanted to expand on my comments that he quotes.

The core question is whether the Mets would have had a better chance of winning had they sacrificed. As a first approximation at an answer based only on numbers, we can review the matrix that Tango/Lichtman/Dolphin provided in The Book. There they show a mathematically derived version of a run outcome matrix, as opposed to one built from actual outcomes. The derived version smooths out the probabilities for situations that have few actual occurrences.

In any case, the matrix is calculated for a run environment of 5.0 runs per game, roughly equivalent to the game of 1994-2005. Using that matrix you see the following:

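| Base | Out | RE | 0 | 1 | 2 | 3 | 4 | 5+ |
|------|-----|-------|-------|-------|-------|-------|------|------|
| 12 | 0 | 1.585 | 35.3% | 22.0% | 16.2% | 13.1% | 7.0% | 6.3% |
| 23 | 1 | 1.451 | 30.2% | 28.5% | 22.4% | 9.9% | 5.3% | 3.7% |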

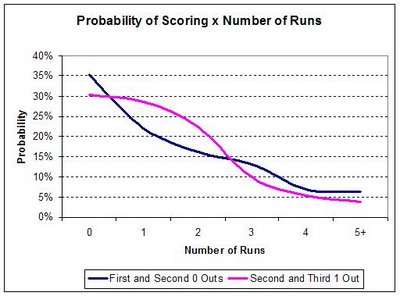

So with runners on first and second and nobody out, teams would be expected to score exactly 2 runs 16.2% of the time and 2 or more runs 42.6% of the time. If they had sacrificed successfully, giving up the out for the bases, the team would (with second and third and 1 out) have a 22.4% chance of scoring exactly 2 runs and a 41.3% chance of scoring 2 or more. In other words, given those percentages you would bunt if you were playing for the tie and swing away to play for the win. As Carl noted, the case for bunting is a little stronger than I had imagined. My assumption was that the probability of scoring 2 runs once you've bunted would be closer to that with first and second and one out, indicating that the bunt would not be preferred.
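As a quick check on the arithmetic, here is a minimal sketch that sums the two rows of the table. The names `RUN_DIST`, `prob_exactly` and `prob_at_least` are just illustrative, and because the inputs are the rounded table entries, the totals can differ by a few tenths of a point from figures computed on unrounded values.

```python
# Rows copied from the table above: percent chance of scoring exactly
# 0, 1, 2, 3, 4, or 5+ runs in the remainder of the inning.
RUN_DIST = {
    ("12", 0): [35.3, 22.0, 16.2, 13.1, 7.0, 6.3],  # first and second, nobody out
    ("23", 1): [30.2, 28.5, 22.4, 9.9, 5.3, 3.7],   # second and third, one out
}


def prob_exactly(state, runs):
    """Percent chance of scoring exactly `runs` runs (runs must be 0-4)."""
    return RUN_DIST[state][runs]


def prob_at_least(state, runs):
    """Percent chance of scoring `runs` or more, rounded to one decimal."""
    return round(sum(RUN_DIST[state][runs:]), 1)


for state in RUN_DIST:
    print(
        state,
        "exactly 2:", prob_exactly(state, 2),
        "2 or more:", prob_at_least(state, 2),
        "3 or more:", prob_at_least(state, 3),
    )
```

The "exactly 2" column is the playing-for-the-tie number and the "3 or more" column is the playing-for-the-win number, which is where the two base-out states trade places.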

I've graphed this table so you can see how the lines cross with the probability of scoring various numbers of runs.

But of course, even if you were playing for a tie, you'd have to execute the sacrifice first, and overall that's about a 75% proposition (including those times when the defense doesn't get any outs, which account for about 15% of the successful attempts).

But...

In this case the Mets were down by two runs in the bottom of the ninth, and so rather than use Run Expectancy one can instead use Win Expectancy (WX), which Carl also talks about. He quotes BP's own Keith Woolner, who created a mathematical model for WX. So using the same run environment of 5.0 runs per game (I'm using WX values generated by Dave Studeman using Woolner's work), the Mets would have been expected to win 21.6% of the time with runners on first and second, nobody out and 2 runs down. With a successful sacrifice their odds of winning go up to 29.4%. That puts the break-even percentage for the sacrifice at only 53.6%, since if they fail their odds drop to 12.6% (assuming the failed sac leaves the runners on first and second with 1 out).
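The break-even figure can be reproduced with a few lines of arithmetic. This is only a sketch: the function names are made up for illustration, and plugging in the overall 75% sacrifice success rate is a simplifying assumption, since it treats every success as ending with second and third and one out.

```python
def break_even(wx_now, wx_success, wx_failure):
    """Success rate at which attempting the play leaves WX unchanged."""
    return (wx_now - wx_failure) / (wx_success - wx_failure)


def wx_if_attempted(p_success, wx_success, wx_failure):
    """Expected WX when the play succeeds with probability p_success."""
    return p_success * wx_success + (1 - p_success) * wx_failure


# WX values quoted above (5.0 runs per game, bottom of the ninth, down two).
WX_NOW = 0.216        # first and second, nobody out
WX_BUNT_OK = 0.294    # second and third, one out
WX_BUNT_FAIL = 0.126  # first and second, one out

be = break_even(WX_NOW, WX_BUNT_OK, WX_BUNT_FAIL)
print(f"break-even success rate: {be:.1%}")

# Assumption: the overall 75% success rate applies to this at-bat.
wx_bunt = wx_if_attempted(0.75, WX_BUNT_OK, WX_BUNT_FAIL)
print(f"WX when bunting at 75%: {wx_bunt:.1%} vs {WX_NOW:.1%} swinging away")
```

Lowering the assumed success rate toward the break-even point is what erodes the bunt's edge.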

So, like I said, it's a closer call than I had suspected. But had Randolph elected to bunt, who would have done it? Clearly the Mets would have called on Chris Woodward or Anderson Hernandez to lay one down, but I think Randolph (like most people, including me) would rather put the game in the hands of a proven hitter in Floyd. You also have to consider that Adam Wainwright, throwing 95 with that big curve, is difficult to bunt against, and that the Cardinals would have been all over a bunt attempt. Both factors drive down the success percentage, which makes it more attractive for the pinch hitter to swing away: game theory in action. In all, I think Randolph made the right choice, and it's hard to argue against his logic.

This brings to mind other famous strategic decisions from postseasons past...

The most recent one that comes to mind is Dave Roberts' steal of second in Game 4 of the 2004 ALCS. There the Sox were trailing 4-3 when Roberts pinch ran for Millar with nobody out. In that situation, with a run environment of 3.6 runs (roughly equivalent to the deadball era, thereby accounting for the fact that Mariano Rivera was in the game), the WX for the Sox stood at 31.1%. If Roberts steals successfully, the WX goes up to 41.9%, but if he fails it goes down to 9.1%.

The break-even percentage (the success rate the stolen base needs in order to be a break-even play) can then be calculated as:

BE = (.311 - .091) / (.419 - .091) = .220 / .328

or .671, about 67%.

In other words, if Roberts felt that he had at least a two-thirds shot at making it, then it was a good gamble. Apparently he did (we'll pretend anyway) and the rest is history.
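For anyone wondering where that formula comes from, here is a short sketch of the derivation. The symbols are introduced here purely for illustration: $p$ is the chance the play succeeds, $W_0$ is the WX before the attempt, and $W_s$ and $W_f$ are the WX after a success and a failure. The play breaks even when the expected WX of attempting it equals the WX of staying put:

$$
p\,W_s + (1 - p)\,W_f = W_0
\quad\Longrightarrow\quad
p = \frac{W_0 - W_f}{W_s - W_f}
$$

With the Roberts numbers, $W_0 = .311$, $W_s = .419$ and $W_f = .091$, which gives $p = .220 / .328 \approx .671$.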

The other one that most folks will think of is Babe Ruth getting caught stealing to end the 1926 World Series against the Cardinals. Ruth walked with two outs in the bottom of the ninth and his team down 3-2, which gave the Yankees (using their 1926 run environment) a 9.5% chance of winning. Had the steal succeeded, their odds would have gone up to 14.6%; since getting caught ended the game, Ruth's break-even percentage was 65.4%. His career stolen base percentage was around 50%, and he was thrown out easily with slugger Bob Meusel at the plate, so his odds in that situation may have been much lower than 65%, making it a poor play.
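To see how costly the attempt was at different success rates, here is a rough sketch using the rounded figures quoted above. The constant and function names are illustrative, and the sample success rates other than Ruth's roughly 50% career mark are arbitrary choices. Note that the rounded inputs give a break-even of about 65.1%, slightly off the 65.4% quoted, which presumably came from unrounded WX values.

```python
WX_STAY = 0.095      # Ruth on first, two outs, down one in the bottom of the ninth
WX_STEAL_OK = 0.146  # Ruth on second, two outs
WX_CAUGHT = 0.0      # the third out ends the game and the Series


def wx_if_attempted(p_success):
    """Expected WX if Ruth runs and is safe with probability p_success."""
    return p_success * WX_STEAL_OK + (1 - p_success) * WX_CAUGHT


# Break-even from the rounded inputs (failure WX is zero here).
break_even = (WX_STAY - WX_CAUGHT) / (WX_STEAL_OK - WX_CAUGHT)
print(f"break-even from rounded inputs: {break_even:.1%}")

# 0.50 is roughly Ruth's career rate; the other rates are arbitrary samples.
for p in (0.50, 0.65, 0.80):
    delta = wx_if_attempted(p) - WX_STAY
    print(f"success rate {p:.0%}: WX {wx_if_attempted(p):.1%} ({delta:+.1%} vs staying put)")
```

At his career rate, running gives away roughly two points of win expectancy compared with leaving the bat in Meusel's hands.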
